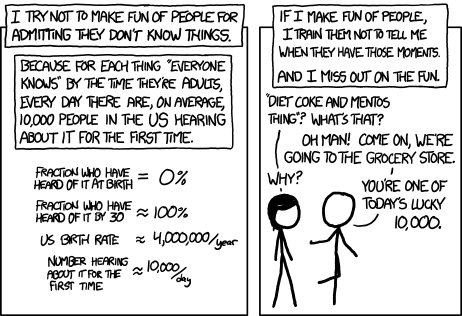

To quote Randall Munroe: “Saying ‘what kind of an idiot doesn’t know about the Yellowstone supervolcano’ is so much more boring than telling someone about the Yellowstone supervolcano for the first time.”

In astronomy education, we spend a lot of time saying “what kind of idiot doesn’t know about lunar phases.” I think it’s time to ask ourselves why we get upset that people (especially kids!) don’t understand things we haven’t yet taught them, and how we can make it ok for people to say “I don’t know” instead of BSing bad answers.

I’m currently attending The International Symposium on Education in Astronomy and Astrobiology (#ise2a) in Utrecht, the Netherlands. Over the weekend, I attended the Psychology of Programming Interest Group (#ppig2017) meeting in Delft, the Netherlands. While one meeting uses the modifier “Education” and the other uses the modifier “Psychology,” the two had largely overlapping content, with talks addressing: barriers to learning; educational frameworks and progressions; and ways of determining expertise.

There were/are two major differences between these conferences.

- The kind of interdisciplinary engagement is not the same. At the computer science conference (N ~ 25) there was an amazing mixture of CS professors, industry programmers, neuroscientists, psychologists, and people focused on education – there didn’t seem to be any clear majority. At the astronomy conference (N ~ 80) we have astro professors and people with astronomy and education degrees who are focused on education and communication, and who work in research centers or schools. The majority of attendees are people with astronomy degrees at some level.

- The way novice/new learners are discussed is very different. In computer science, the focus was on transforming blank slates and novices into experts. In astronomy, people start from a point of concern about misconceptions, and actively pre-test for knowledge but get frustrated with the wrong answers on the pre-tests.

I’m going to only briefly touch on the first topic: It was amazing to watch what discussions arose from having psychologists, neuroscientists, and content experts all discussing education together. More of this please! (Also, I now want to stick people in MRIs and watch them learn.)

Moving on…

One of the things I’ve been thinking about (and am still thinking about) is this: why does CS start from “Folks are blank slates” while astronomy starts from “People have lots of misconceptions that need to be measured and fixed”?

At the most simplistic level, all little kids are interested in space and dinosaurs, and get exposed to the sky above them and what they can read in books and see on TV. They absorb information (and misinformation) while bombarding people with “Why” questions that may or may not get correctly answered. Kids/people try to piece together their own gut level understanding of their place in space the same way they piece together ideas like “if you let go of an object, it will fall.” Put simply, kids (who become adults) stitch together a patchwork of factoids, inferences, and (right and wrong) answers to questions to build an understanding of their reality.

Kids (who become adults) don’t generally develop misconceptions about programming algorithms and when to use ‘for loops’ vs ‘while loops’ while using a computer or mobile device. They do often think that writing software must be hard (but they think astronomy must be hard too!). While thinking something is hella hard affects learning, (IIRC – I need to do a literature review of this) it is easier to overcome “hella hard” than misinformation and misconceptions. (Again, I need to verify this with the literature.)

One concern I have about this simplistic model is that the focus on misconceptions in astronomy may be leading to bad results because our methodology isn’t careful to separate lack of knowledge from false knowledge. This may in turn be affecting our understanding of our learners in negative ways that cause us to focus on “fixing” learners, instead of focusing on transforming new/novice learners into experts. If we could focus, as computer science does, on teaching information to blank slates whom we can get learning while doing, while skipping all the “let me fix this misconception…” that would be a beautiful thing.

Pre-testing is a (necessary?) evil. A student faced with a pre-test that asks them questions about things they don’t know is going to do their best to please, and will use their existing experience to pull an answer out of thin air (or, as we often say when grading, the kid is going to try and bullshit their way through the questions). This is consistent with Piaget’s theory of learning, which focuses on how humans construct their own mental models of our world that are shaped and reshaped by our experiences. With a lack of complete experiences and knowledge, students can’t build an accurate mental model and can’t accurately answer questions. When we ask kids to complete a pre-test and we don’t say “Leave this blank if you don’t know”, we require students to extrapolate beyond their understanding.

According to Descartes, sin originates from acting beyond knowledge. In a pre-testing, leave-nothing-blank scenario, we are demanding people answer questions beyond their knowledge. I wouldn’t say we’re asking them to sin, but perhaps we are “sinning” when we are upset with their lack of knowledge that appears as misconceptions.

Over my career, I have over and over and over seen education researchers present the ridiculous answers that learners have written on pre-tests as evidence of how misinformed the public (and kids!) are. Over and over and over, researchers have scorned all the learners who, prior to being taught, wrote that the phases of the moon must be caused by the Earth’s shadow. This is punching down (here’s a good essay on the topic re: education); this is mocking people for trying to use their experiences to problem solve what they weren’t taught. We need to stop doing this (and encourage problem solving with testing).

One thing computer science has that I want to find a way to steal for other disciplines is the idea of unit testing. Each piece of code can have a test developed to make sure the answer (=algorithm) being proposed is actually right. I guess at code syntax all the time, and I (because I don’t use compiled languages) usually know in about 5 seconds if I guessed wrong. I don’t get a chance to build misconceptions about how code works, because if I’m wrong, my unit tests fail, and my code doesn’t get the chance to go spectacularly sideways. I don’t know how to bring this “test test test” mentality to astronomy other than to say, “Dear Student, it’s ok to say I don’t know, but I would guess” and to then test your guess against the answer listed in a respectable source.
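For readers who haven’t met unit tests, here is a minimal sketch of the “guess, then test” loop, using the Moon as the subject. Everything in it is my own illustration rather than anything shown at either conference: the function name `illuminated_fraction` is made up, and the geometry is simplified (the Sun is assumed to be infinitely far away, so the lit fraction depends only on the Sun-Earth-Moon angle). The tests encode things anyone can check against the sky or a respectable source: a new moon is dark, a quarter moon is half lit, a full moon is fully lit.

```python
import math


def illuminated_fraction(elongation_deg):
    """Guess at the fraction of the Moon's disk that looks lit from Earth.

    Assumption for this sketch: the Sun is infinitely far away, so only the
    Sun-Earth-Moon angle (the elongation, in degrees) matters.
    """
    return (1 - math.cos(math.radians(elongation_deg))) / 2


# Each test compares the guess with something we can observe directly.
def test_new_moon_is_dark():
    assert math.isclose(illuminated_fraction(0), 0.0, abs_tol=1e-9)


def test_quarter_moon_is_half_lit():
    assert math.isclose(illuminated_fraction(90), 0.5, abs_tol=1e-9)


def test_full_moon_is_fully_lit():
    assert math.isclose(illuminated_fraction(180), 1.0, abs_tol=1e-9)


# Tiny stand-in for a test runner: a wrong guess fails loudly, right away.
for test in (test_new_moon_is_dark,
             test_quarter_moon_is_half_lit,
             test_full_moon_is_fully_lit):
    test()
print("all checks passed")
```

If the guess inside the function were wrong, one of the `assert` lines would fail within seconds, long before the wrong idea had a chance to settle in.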

When we focus on pre-tests, we are also creating a bad starting point educationally.

First of all, we know from research that the mere act of mentioning a misconception can reinforce a misconception. If you tell people who don’t know a misconception, “It is a misconception to think that vaccines are dangerous,” you may even seed them with doubts and ideas. They will walk away hearing, “There are folks who think vaccines are dangerous.” They will walk away asking, “Who are you to declare vaccines are safe!” They may even decide, “This is complex, and I am not an expert, and rather than try and understand, I’m just going to say la la la la la and not deal with vaccines at all.”

Second, when you say or imply, “We’re doing this pre-test to see what misunderstandings you already have,” you are essentially saying, “You have a lot of misunderstanding.” Research shows that if prior to a test you tell women or minorities that they are worse at some subject than their white / male peers, they will do worse. I think we need to see if we are biasing pre-test outcomes with how we explain what we’re doing. I now want someone to study whether saying, “We are looking to see what you know so we don’t bore you with explaining things you already understand,” gets different results than saying, “We are looking for misconceptions and weaknesses we need to address.”

Finally, there is a fundamental difference between someone not knowing but doing their best to answer a question in an academic setting (e.g. BS’ing an answer) and someone having a misconception they are confident is right. I suspect all of us at some point have confidently written out an answer we were certain was right only to … be very very wrong. I suspect many of us have also, with a fair amount of self-loathing, desperately tried to write down enough vague words and best guesses that maybe we’d get some points even though we had absolutely no idea what the answer should be. The difference between not knowing and “knowing” the wrong answer is taken into consideration with many standard tests in the US (such as the SATs and GREs for college and graduate school). In these instances, points are added for right answers, fractional points are deducted for wrong answers, and nothing happens when answers are omitted.
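To make that scoring rule concrete, here is a small sketch. The size of the deduction is my assumption: I’m using the classic “correction for guessing,” where a wrong answer on a question with `choices` options costs 1/(choices - 1) of a point. The function names are hypothetical.

```python
from fractions import Fraction


def formula_score(right, wrong, omitted, choices=5):
    """+1 per right answer, -1/(choices - 1) per wrong answer, 0 per blank.

    The penalty size is an assumption for this sketch, not a quoted rule.
    """
    penalty = Fraction(1, choices - 1)
    # `omitted` is accepted only to make the point: blanks change nothing.
    return right - wrong * penalty


def expected_guess_score(choices=5):
    """Average points earned by one purely random guess under this rule."""
    p_right = Fraction(1, choices)
    return p_right * 1 - (1 - p_right) * Fraction(1, choices - 1)


print(formula_score(right=10, wrong=4, omitted=6))   # 10 - 4 * (1/4)
print(expected_guess_score())                        # random guessing nets zero
```

Under these assumptions a random guess is worth zero points on average, so leaving a question blank costs nothing, and “I don’t know” becomes a safe answer.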

When we look at what a student has BS’d and claim “they have a misconception” and wring our hands, what we are doing is 1) not giving the kid credit for trying to problem solve, and 2) mistaking ignorance for misunderstanding.

If we then mock the ridiculousness of the guess a student made, we are punching down instead of lifting up. Education should be only about lifting up. Kids are cruel enough to each other. It is our job to be the light.

So what can we do? How can we do better?

First of all, we must be consistently thoughtful in how we do our pre-testing. We must try to distinguish between common wild guesses and common misunderstandings that people are confident are right. We should consider asking students to state how confident they are in their answers (and tell them “not confident” is ok). If testing fatigue is a concern, then we should encourage students to leave answers blank instead of writing down guesses.
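As a rough sketch of what that bookkeeping could look like, the snippet below sorts pre-test responses by correctness and self-reported confidence. The category labels, the `classify` function, and the sample responses are all made up for illustration; a real instrument would need a more careful confidence scale.

```python
from collections import Counter


def classify(answer_given, is_correct, confident):
    """Sort one pre-test response into a hypothetical category."""
    if not answer_given:
        return "left blank"  # the student said "I don't know"
    if is_correct:
        return "knows it" if confident else "tentative but right"
    # Wrong answers split by confidence: this is the distinction that matters.
    return "confident misconception" if confident else "wild guess"


# Made-up responses to "What causes the phases of the Moon?"
# Each tuple: (answer_given, is_correct, confident)
responses = [
    (True, False, False),
    (True, False, False),
    (True, False, True),
    (True, True, True),
    (False, False, False),
]

tally = Counter(classify(*r) for r in responses)
for category, count in tally.items():
    print(f"{category}: {count}")
```

In this made-up class, three students gave a wrong answer, but only one of them is confident in it. Only that student has a misconception to unpick; the other two were doing their best to problem solve.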

And, to say it again, we should never punch down.

If it is a common misconception that people strongly think the seasons are due to the Earth’s changing distance from the Sun, and they are confident in this wrong answer, then we should never make fun of this. We should ask, “Where do they gain confidence in this bad information?” We should look to cut things off at the source, and if the source is students confidently thinking their assumption must be true… Well, maybe we need to learn from computer science and find our own version of unit testing. Maybe we need to work to teach questioning, and the importance of verifying our inferences and checking our data. Isn’t that better than trying to get every possible concept into every child’s head as young as possible?

It’s ok not to know things. It’s amazing to learn new things. It’s even more amazing to have the tools that are needed to explore our world as problem solvers and test and verify our answers.

And I still want to stick someone in an MRI machine and watch them learn.

header photo by arbyreed

I like the idea of explaining the pretests being for the sake of not boring students with stuff they already know. It sounds like it would make it less intimidating. I imagine it might also be useful to ask on a pre-test if there are any topics the student is interested in or already knows about.
